“The future of computing is parallel.” This isn’t simply a slogan; it captures the philosophy that has transformed how we approach demanding computing problems. NVIDIA’s CUDA is the technology that turned Graphics Processing Units (GPUs) from image-processing hardware into general-purpose parallel computing devices. If you are wondering how hyper-realistic video games, modern AI, or scientific simulations accomplish their feats, the answer often lies in NVIDIA CUDA.

In this post, we look at what NVIDIA CUDA is, how it works, and why it has become an essential tool across diverse industries. We also cover the architecture of NVIDIA GPUs, describe their specialized cores, and explain CUDA in practical terms.

The Genesis of a Revolution: Why Was CUDA Born?

For decades, the Central Processing Unit (CPU) was the undisputed brain of the computer. CPUs are optimized for completing many different tasks one after another, which makes them extremely versatile. They handle complex logic and branching decisions well, so they excel at single-threaded work.

Nevertheless, another category of computing problems surfaced, particularly in graphics. Rendering requires an enormous number of calculations, often billions, down to the level of individual pixels in every frame. Performing them one after another would take too long. This is why Graphics Processing Units (GPUs) were designed: specialized hardware that performs a great number of simple, repetitive tasks at once, in parallel rather than sequentially.

Early GPUs were rigid and focused almost entirely on rendering graphics, which left their parallel processing engines untapped for anything else. NVIDIA recognized that by repurposing the many processing units designed for graphics, it could change the whole industry. The Compute Unified Device Architecture (CUDA) was introduced in 2006 and extended the GPU beyond graphics into general-purpose computation.

CUDA did not simply make GPUs render faster; it aimed to turn GPUs into devices for general-purpose parallel computing. It did so by providing a software layer, a set of tools, and a programming model that give developers access to the GPU’s raw computational power for a wide range of scientific, engineering, and data-intensive programs. Developers could now accelerate applications many times over by running the parallel parts of a workload on CUDA-enabled GPUs while the sequential parts continued to run on the CPU.

Understanding NVIDIA GPUs: CUDA’s Multitude of Cores

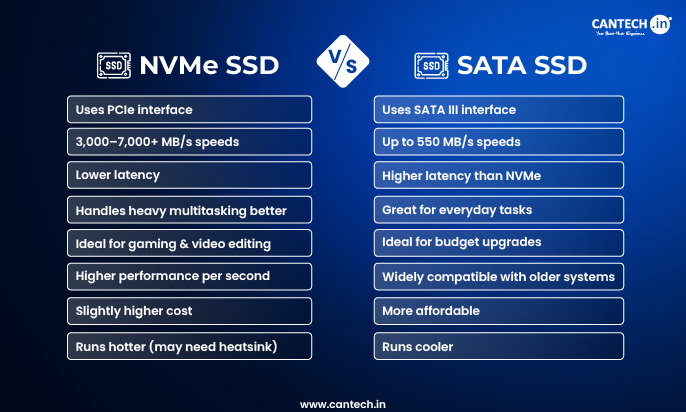

To understand CUDA, it helps to understand the NVIDIA GPU hardware that it coordinates. A GPU typically connects to the rest of the PC over a PCIe interface. Modern GPUs contain thousands of small, specialized processing units known as cores. These cores work together to deliver the speed that NVIDIA’s flagship GPUs are known for.

The following describes the main types of cores you will encounter on NVIDIA GPUs:

CUDA Cores: The All-Purpose Workhorses

CUDA Cores are the foundation on which the computational power of an NVIDIA GPU is built. Think of them as far more numerous but simpler counterparts to CPU cores, designed to run simultaneously.

- Functionality: CUDA Cores are general-purpose. They execute floating-point and integer calculations, so they can be used for almost all types of parallel computing. When GPU marketing claims “thousands of cores,” it is most likely referring to CUDA Cores.

- Applications: They excel at graphics and scientific simulations, workloads that can be divided into many independent parallel tasks.

Tensor Cores: The AI Specialists

One of NVIDIA’s most important innovations for artificial intelligence (AI) and deep learning is the Tensor Core. Tensor Cores are specialized units designed to execute matrix multiplications.

- Functionality: Neural networks fundamentally consist of matrix multiplication and accumulation. AI training and inference can often use lower-precision arithmetic (such as FP16 or FP8), and Tensor Cores are designed to perform it efficiently. “Mixed precision” computation improves performance and also reduces memory bandwidth and storage needs for many workloads.

- Applications: Tensor Cores are indispensable for demanding AI workloads such as training large machine learning models and accelerating real-time AI inference. They are pivotal to the widespread adoption of NVIDIA GPUs in the AI ecosystem.
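The multiply-and-accumulate pattern described above can be sketched in plain Python. This is only an illustration of the arithmetic, not how Tensor Cores are programmed: the function names `to_fp16` and `mma_mixed_precision` are made up for this example, and Python's built-in float stands in for the wider accumulator.

```python
import struct


def to_fp16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision (FP16) value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def mma_mixed_precision(a, b, c):
    """Compute D = A x B + C with FP16-rounded inputs and a wider accumulator.

    a is rows x inner, b is inner x cols, c is rows x cols (lists of lists).
    """
    rows, inner, cols = len(a), len(b), len(b[0])
    d = [[0.0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            acc = c[i][j]  # accumulator keeps full Python float precision
            for k in range(inner):
                # Inputs are rounded to FP16 before they are multiplied.
                acc += to_fp16(a[i][k]) * to_fp16(b[k][j])
            d[i][j] = acc
    return d
```

Calling `mma_mixed_precision([[1.0, 2.0], [3.0, 4.0]], [[1.0, 0.0], [0.0, 1.0]], [[0.0, 0.0], [0.0, 0.0]])` returns the first matrix unchanged, because small integers are exactly representable in FP16. A value such as 0.1 is not, which is why the accumulation is kept in a wider format.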

Ray Tracing Cores (RT Cores)

RT Cores are another NVIDIA innovation, this one aimed at graphics realism.

- Functionality: Ray tracing is a rendering technique that models the behavior of real-world light. It requires complex computations: casting light rays, working out how they interact with objects through reflection and refraction, creating shadows, and determining the color of each pixel. RT Cores are specialized for these lighting calculations.

- Applications: RT Cores make photorealistic real-time video game graphics, professional visualization, and film rendering possible. They enable detailed real-time lighting, shadows, and reflections at a level that was previously out of reach.
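To give a feel for the kind of calculation ray tracing repeats for every pixel, here is a minimal sketch of a single ray tested against a single sphere. It is a simplification chosen to keep the math short (real scenes are usually built from triangles), and the function name `ray_sphere_hit` is an assumption made for this example.

```python
import math


def ray_sphere_hit(origin, direction, center, radius):
    """Return the distance along the ray to the nearest hit, or None on a miss.

    origin, direction, and center are 3-element sequences; direction must be
    non-zero. Solves |origin + t * direction - center|^2 = radius^2 for t.
    """
    # Vector from the sphere center to the ray origin.
    oc = [o - c for o, c in zip(origin, center)]
    a = sum(d * d for d in direction)
    b = 2.0 * sum(d * e for d, e in zip(direction, oc))
    c = sum(e * e for e in oc) - radius * radius
    disc = b * b - 4.0 * a * c
    if disc < 0.0:
        return None  # the ray's line never touches the sphere
    sqrt_disc = math.sqrt(disc)
    # Try the nearer root first; skip hits that lie behind the ray origin.
    for t in ((-b - sqrt_disc) / (2.0 * a), (-b + sqrt_disc) / (2.0 * a)):
        if t >= 0.0:
            return t
    return None
```

A ray starting at the origin and pointing along +z hits a unit sphere centered at (0, 0, 5) at distance 4.0, while a ray pointing along +y misses it. A renderer performs tests like this for every ray it casts, which is why dedicated hardware pays off.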

The strength of NVIDIA’s architecture lies in how these cores work together. CUDA Cores handle the general-purpose work, Tensor Cores take on AI tasks, and RT Cores handle ray-traced visuals. NVIDIA GPUs owe their efficiency to this division of work among specialized units, unified by the CUDA platform.

CUDA is more than a set of cores; it is an ecosystem that lets developers fully exploit NVIDIA GPUs. The CUDA platform consists of:

- A Computing Platform: CUDA gives software low-level, direct access to the GPU’s parallel computational elements, so instructions can execute in parallel and finish far sooner than they would sequentially.

- CUDA as an API: CUDA extends or integrates with languages such as C, C++, Fortran, Python, and Julia. It lets developers define functions called “kernels” that execute in parallel across the GPU’s thousands of cores.

- The CUDA Toolkit: This is every developer’s fundamental toolbox. It comprises:

  - GPU-accelerated Libraries: CUDA provides pre-optimized libraries for common computation tasks such as linear algebra (cuBLAS), deep learning (cuDNN), fast Fourier transforms (cuFFT), and random number generation (cuRAND). They offer high-level interfaces that bring GPU acceleration to an algorithm without requiring low-level CUDA programming.

  - Compiler (NVCC): NVCC is the CUDA compiler. It builds executables containing native NVIDIA GPU code from CUDA source files.

  - Development Tools: Debugging and profiling tools (like Nsight) specifically designed for optimizing CUDA applications.

- CUDA Runtime: The software abstraction layer responsible for managing the execution of CUDA programs on the GPU.

How CUDA Works: The Simplified Flow

- Host and Device: In a CUDA-enabled program, the CPU is referred to as the “Host” and the GPU as the “Device”.

- Kernel Execution: Developers write “kernels”, which are functions meant to run in parallel on the GPU.

- Data Transfer: The data the GPU needs is first copied from the host’s memory into the device’s memory.

- Parallel Execution: The host launches the kernel on the device, and the GPU schedules thousands of threads, organized hierarchically as a Grid of Blocks with each Block containing Threads. Every thread runs the same kernel instructions but on different input data.

- Result Transfer: After the processing is done, the results are transferred from the device’s memory to the host’s memory.

This parallel execution model is what makes significant speedups possible. Instead of carrying out tasks one after another, the GPU tackles many of them concurrently, which greatly reduces processing time for workloads that are amenable to this approach.
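The flow above can be emulated on the CPU to show how each thread finds its own piece of the data. This is a sketch under stated assumptions, not real CUDA code: the names `vector_add_kernel`, `launch`, and `vector_add` are made up for this example, the "device" buffers are ordinary Python lists, and the threads run one after another instead of in parallel.

```python
def vector_add_kernel(block_idx, block_dim, thread_idx, a, b, out):
    """Body executed by one emulated thread: add a single pair of elements."""
    i = block_idx * block_dim + thread_idx  # global index of this thread
    if i < len(out):  # threads past the end of the data do nothing
        out[i] = a[i] + b[i]


def launch(kernel, grid_dim, block_dim, *args):
    """Emulate a 1-D kernel launch by visiting every (block, thread) pair."""
    for block_idx in range(grid_dim):
        for thread_idx in range(block_dim):
            kernel(block_idx, block_dim, thread_idx, *args)


def vector_add(host_a, host_b, block_dim=4):
    """Walk through the host/device flow for an element-wise addition."""
    # Data transfer: copy host data into stand-in "device" buffers.
    dev_a, dev_b = list(host_a), list(host_b)
    dev_out = [0] * len(host_a)
    # Choose enough blocks to cover every element (ceiling division).
    grid_dim = (len(host_a) + block_dim - 1) // block_dim
    # Parallel execution: every thread runs the same kernel on different data.
    launch(vector_add_kernel, grid_dim, block_dim, dev_a, dev_b, dev_out)
    # Result transfer: copy the output back to the host.
    return list(dev_out)
```

With five elements and four threads per block, `vector_add` launches two blocks, so eight emulated threads run and the last three are turned away by the bounds check. The same index calculation and guard are what let a real kernel cover data whose size is not a multiple of the block size.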

Key Reasons Why NVIDIA CUDA Is Dominant

The advances NVIDIA CUDA brought to computing were, and remain, evident. Its benefits continue to shape developments in a number of fields:

- Extreme Parallelism: CUDA’s central benefit is that it lets a program use the GPU’s thousands of cores to execute operations in parallel. For embarrassingly parallel problems, those that can be broken down into many small, independent parts, the speedup over a CPU is often substantial.

- Accelerated Computing: By running an application’s compute-intensive, parallel portions on the GPU, CUDA dramatically reduces execution time and makes previously impractical tasks feasible.

- Versatility: GPUs were initially focused on graphics, but CUDA’s applications now span fields such as scientific research, financial modeling, data analysis, and artificial intelligence.

- Rich Ecosystem: NVIDIA has built a rich ecosystem around CUDA, with extensive documentation, an active developer community, and many optimized libraries and frameworks, all of which speed up the development of powerful applications.

- Energy Efficiency: For highly parallel, high-volume tasks, performing the calculations on a GPU with CUDA can draw less energy than performing them on a CPU.

- Scalability: CUDA-based applications can run across a range of NVIDIA GPU systems, from a single consumer-grade GPU to a supercomputing cluster.

Applications of NVIDIA CUDA: Specific Areas of Significance

NVIDIA CUDA has had an impact on nearly every sector that relies on big data or complex simulations. Here are some of the most notable areas:

- AI and Deep Learning: This is the most common and perhaps the most profound use of CUDA. It accelerates deep learning frameworks such as TensorFlow, PyTorch, and Keras when training neural networks for:

  - Image and speech recognition

  - Natural language processing (NLP)

  - Self-driving cars

  - Medical imaging

- Scientific Computing and Research:

  - Climate Modeling: Simulating complex weather systems and how they change over long periods.

  - Molecular Dynamics: Simulating the behavior of atoms and molecules, with applications in drug design and materials science.

  - Astrophysics Simulations: Simulating galaxies, black holes, and other cosmic phenomena.

  - Computational Fluid Dynamics (CFD): Studying the movement of fluids in aerospace and automotive engineering.

  - High-Performance Computing (HPC): Some of the world’s fastest scientific and engineering supercomputers use CUDA.

- Data Mining and Analytics: Extracting useful information from databases in less time and processing huge volumes of data for a variety of applications.

- Financial Risk Assessment: Analyzing an investment’s financial risk, evaluating options trades, and executing algorithmic trades.

- Computer Generated Graphics: In animation, gaming, and film, CUDA is used for advanced rendering, real-time ray tracing, and physics simulations that demand high accuracy.

- Medical Imaging: Accelerated computation speeds up the retrieval, processing, and analysis of medical data for diagnostics and research.

The NVIDIA H100 GPU: A CUDA Driven Powerhouse

The NVIDIA H100 GPU shows how NVIDIA continues to advance parallel processing hardware alongside CUDA. Designed for the most intensive AI and HPC workloads, the H100 is built on the Hopper architecture and includes:

- CUDA Cores: More than ten thousand cores for general-purpose parallel computing.

- Fourth-Generation Tensor Cores: These accelerate AI training and inference, especially for transformers, the foundation of large language models. With support for FP8 precision, the H100’s Tensor Cores can deliver significant performance gains.

- NVLink Interconnect: Lets the H100 communicate with other GPUs at extremely high bandwidth, which is especially important for scaling large AI models and HPC simulations across multiple GPUs and servers.

- Transformer Engine: A specialized feature of the H100 that dynamically adjusts precision for the best efficiency in transformer models.

- NVIDIA Confidential Computing: An added security layer that lets users protect the confidentiality and integrity of their data and applications while using GPU acceleration.

With H100 GPU servers, NVIDIA continues to pair hardware and software innovation, with CUDA designed to address the world’s ever-increasing computational needs.

Getting Started with CUDA: Installation and Beyond

Exploring GPU-accelerated computing begins with getting CUDA installed.

NVIDIA CUDA Installation

- Check GPU Eligibility: First, check that you have an NVIDIA GPU that supports CUDA. CUDA-compatible GPUs are available across the GeForce, Quadro, and Tesla series. For a detailed list of CUDA-compatible GPUs and their compute capabilities, visit NVIDIA’s website.

- Install NVIDIA GPU Drivers: The NVIDIA driver for your operating system (Windows or Linux; current CUDA Toolkit releases no longer support macOS) must be up to date and compatible with the CUDA Toolkit. The NVIDIA driver downloads page is the best source.

Download and Install the CUDA Toolkit

The NVIDIA developer website has a dedicated CUDA Toolkit download page.

Select your operating system, architecture, and preferred CUDA version. It is good practice to check the compatibility matrix of deep learning frameworks such as TensorFlow or PyTorch, as they tend to depend on specific CUDA versions.

- Make sure to download the right installer, for example an executable file for Windows, or a .run, .deb, or .rpm file for Linux.

- After downloading, run the installer and follow the on-screen instructions. Install the essential components: the CUDA Toolkit, the development libraries, and optionally the documentation.

- Important: Check whether the installer has set up the environment variables. On Windows these are typically CUDA_PATH and PATH; on Linux, LD_LIBRARY_PATH and PATH.

- If you plan to work with deep learning frameworks, you will also need to install cuDNN, the CUDA Deep Neural Network library.

- Downloading cuDNN may require registering as an NVIDIA developer, which is free.

- Download the cuDNN version that corresponds to the CUDA Toolkit version installed on your computer.

- The process is simple. In most cases, installation means extracting the archive and copying the binaries, header files, and libraries into the CUDA Toolkit directory.

- For cuDNN, make sure the necessary environment variables are set so that your project can find the library.

Confirm Installation

- To verify, either run a simple CUDA sample program or check the compiler version from a command prompt or terminal: “nvcc -V”.
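The verification step can also be scripted. The sketch below looks for nvcc on PATH, runs “nvcc -V”, and reports the environment variables mentioned above. The function names are made up for this example, and the regular expression assumes the version banner contains text of the form “release X.Y”, which may not hold for every release.

```python
import os
import re
import shutil
import subprocess


def parse_nvcc_release(version_text):
    """Extract the 'release X.Y' number from nvcc's version banner, or None."""
    match = re.search(r"release\s+(\d+\.\d+)", version_text)
    return match.group(1) if match else None


def installed_cuda_release():
    """Return the CUDA release reported by nvcc, or None if nvcc is not on PATH."""
    nvcc = shutil.which("nvcc")
    if nvcc is None:
        return None
    result = subprocess.run(
        [nvcc, "-V"], capture_output=True, text=True, check=False
    )
    return parse_nvcc_release(result.stdout)


def cuda_environment():
    """Report the environment variables a CUDA installer is expected to set.

    A value of None means the variable is not set in this process.
    """
    names = ("CUDA_PATH", "LD_LIBRARY_PATH", "PATH")
    return {name: os.environ.get(name) for name in names}
```

If `installed_cuda_release()` returns None, either the toolkit is not installed or its bin directory is missing from PATH, which is the first thing to check in the output of `cuda_environment()`.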

Key Considerations for Installation

- Compatibility Issues: Make sure that your NVIDIA driver, CUDA Toolkit, and deep learning frameworks such as TensorFlow or PyTorch are mutually compatible versions. If they are not, errors may arise.

- Minimum Requirements: Check that your system meets the minimum requirements for the CUDA Toolkit, including disk space and RAM.

- Operating System Variations: Installation steps vary slightly between operating systems. Always consult the NVIDIA CUDA installation guide for your operating system to get the most accurate information.

- Cloud-Based Services: Cloud providers such as AWS, Azure, and Google Cloud usually offer GPU instances that come preconfigured with CUDA and the necessary libraries, which streamlines setup.

The Advances of Parallel Computing

In the past, performance barriers limited computing-driven innovation across many fields. NVIDIA CUDA has dramatically transformed the parallel computing landscape. CUDA opened GPUs to general-purpose computing, Tensor Cores then powered new forms of AI, and Ray Tracing Cores later brought greater visual realism, all integrated into successive GPU architectures.

The need for computing power is growing faster than ever, particularly with the emergence of generative AI and advanced simulations, and the parallel computing that NVIDIA CUDA enables is becoming ever more useful. CUDA unlocks the full potential of NVIDIA GPUs and gives researchers, developers, and innovators the computational power to accelerate discovery.