Think of a symphony orchestra rehearsing a complex piece. Each musician plays their part, and a conductor keeps rhythm, balance, and accuracy in check. In computing, bare metal virtualization plays the conductor’s role, coordinating computing resources without the delays introduced by an intermediate system. It does more than manage virtual machines (VMs); it orchestrates the precise tuning of workloads.

To elaborate, a bare metal hypervisor is installed directly on the physical machine, with no traditional operating system (OS) layer underneath it. As a result, the system’s CPU, memory, and storage resources are allocated more directly and with lower latency. This matters for AI, ML, and the other data-centric workloads that are now common in enterprise settings.

This is the opposite of hosted virtualization, where the hypervisor sits on top of a host OS. That extra layer increases overhead, and the performance of hosted virtual machines suffers as more processes compete with them inside the host OS. The distinction matters as more organizations pursue scale and efficiency at the same time. In this blog, we explore bare metal virtualization, how it works, and where it is used.

What Is Bare Metal Virtualization?

Bare metal virtualization is a type of virtualization in which a hypervisor is installed directly on a server without a host operating system. In contrast to hosted virtualization, where a Type 2 hypervisor runs on top of an operating system such as Windows or Linux, a bare metal hypervisor does not need a host operating system to run virtual machines.

With a bare metal hypervisor, the hypervisor is the first and only layer between the virtual machines and the physical hardware. It interfaces with the virtual machines on one side and with the server’s processors, memory, drives, and network interfaces on the other.

Explaining the Architecture

Bare metal virtualization, also known as Type 1 virtualization, involves installing a hypervisor directly on the server’s hardware, bypassing the need for a traditional operating system. This approach offers performance benefits and increased control for resource-intensive applications.

The following outlines the architecture:

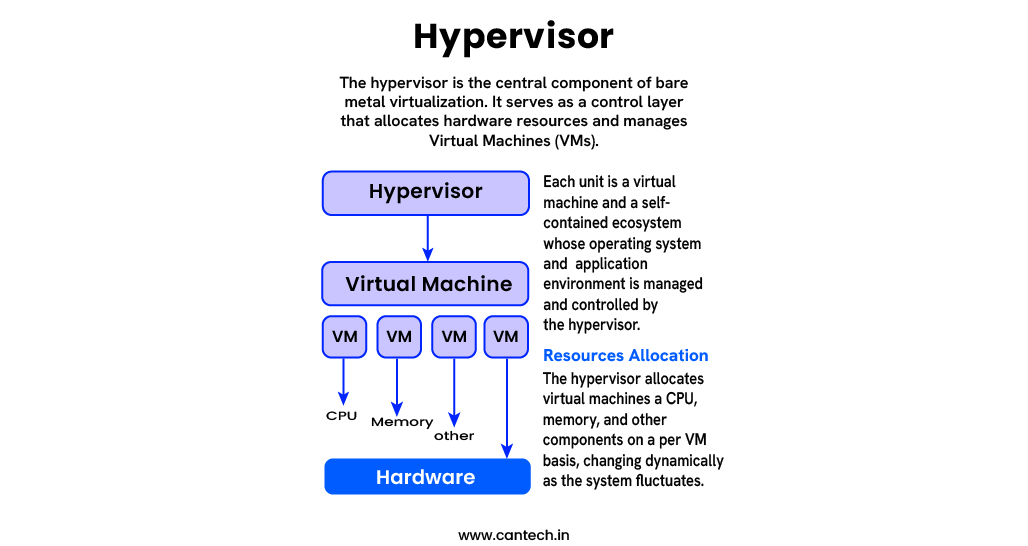

Hypervisor

- The hypervisor is the central component of bare metal virtualization. It serves as a control layer that allocates hardware resources and manages virtual machines (VMs).

- Bare metal hypervisors, also known as Type 1 hypervisors, include VMware ESXi and Microsoft Hyper-V.

- They communicate with the hardware directly and avoid the inefficiencies of a host operating system.

Virtual Machines

- Each virtual machine is a self-contained environment with its own operating system and applications, managed and controlled by the hypervisor.

- In bare metal virtualization, only the hypervisor sits between the virtual machines (VMs) and the underlying hardware, which leads to better performance than hosted virtualization.

Resource Allocation

- The hypervisor allocates CPU, memory, and other resources to each VM individually and adjusts those allocations dynamically as demand fluctuates.

- This keeps hardware utilization high and lets the system scale resources vertically and horizontally depending on the workload.
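To make the per-VM allocation described above more concrete, here is a minimal sketch in Python. It is a toy model, not how any real hypervisor is implemented: the `Host` class, the VM names, and the capacity figures are all hypothetical, and it assumes no overcommitment, which real hypervisors often allow.

```python
from dataclasses import dataclass, field


@dataclass
class Host:
    """Physical capacity of one server (hypothetical numbers)."""
    cpus: int
    memory_gb: int
    allocations: dict = field(default_factory=dict)  # VM name -> (cpus, memory_gb)

    def free(self):
        """Return the (cpus, memory_gb) not yet granted to any VM."""
        used_cpus = sum(c for c, _ in self.allocations.values())
        used_mem = sum(m for _, m in self.allocations.values())
        return self.cpus - used_cpus, self.memory_gb - used_mem

    def allocate(self, vm, cpus, memory_gb):
        """Set or resize a VM's share; refuse if the host lacks capacity."""
        previous = self.allocations.pop(vm, None)
        free_cpus, free_mem = self.free()
        if cpus > free_cpus or memory_gb > free_mem:
            if previous is not None:
                self.allocations[vm] = previous  # keep the old share
            return False
        self.allocations[vm] = (cpus, memory_gb)
        return True


host = Host(cpus=32, memory_gb=128)
host.allocate("training-vm", cpus=16, memory_gb=64)
host.allocate("web-vm", cpus=4, memory_gb=8)
host.allocate("training-vm", cpus=24, memory_gb=96)  # scale up as load grows
print(host.free())  # capacity still available on the host
```

Resizing an existing VM goes through the same capacity check as creating a new one, which is the "changing dynamically" part of the description in miniature.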

Primary Benefits of Bare metal Virtualization

Improvements to Cloud Virtualization

Cloud virtualization improves performance through the dynamic allocation of resources. Bare metal virtualization suits the cloud for the same reason: resources can be reassigned in real time, with little allocated capacity going to waste. This real-time tuning is especially valuable for high-frequency transactions, AI and ML systems, big data analytics, and other performance-sensitive workloads, where the improvement in response times can be significant.

Further Enhancements to Security

Because no host OS sits beneath the hypervisor, there is no general-purpose OS layer for an attacker to take control of, so the risk of OS-based compromise is lower with bare metal virtualization. The hypervisor has a single function, acting as the control layer, which keeps it small and free of unneeded components and leaves a smaller attack surface. Attacks designed to exploit a host OS layer therefore become less useful.

Improvements in Expense Reduction

Virtual machines get more CPU time when there is no host OS resource manager juggling numerous VMs alongside its own processes, and resource allocation becomes simpler. This efficiency lowers operating costs. It also improves server consolidation: more VMs can run on fewer physical servers, so workloads that once needed separate physical systems can be merged onto shared hardware.

Removing the OS layer gives users more flexibility, control, and performance in critical environments. In a secured environment, that control makes automation possible, which improves resource allocation and reduces management overhead. It also simplifies the otherwise manual work of estimating and assigning CPU capacity.
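The consolidation argument above comes down to simple arithmetic, sketched here in Python. Every number is hypothetical: the overhead fractions in particular are placeholders chosen for illustration, not measurements of any hypervisor.

```python
import math


def servers_needed(vm_count, cores_per_vm, cores_per_server, overhead_fraction):
    """Servers required when a fraction of each server's cores is lost to overhead."""
    usable_cores = cores_per_server * (1 - overhead_fraction)
    vms_per_server = math.floor(usable_cores / cores_per_vm)
    if vms_per_server < 1:
        raise ValueError("a single VM does not fit on one server")
    return math.ceil(vm_count / vms_per_server)


# Hypothetical inputs: 200 VMs, 4 cores each, 64-core servers.
heavier_layer = servers_needed(200, 4, 64, overhead_fraction=0.15)
thinner_layer = servers_needed(200, 4, 64, overhead_fraction=0.03)
print(heavier_layer, thinner_layer)
```

With these assumed inputs, the thinner layer fits two more VMs per server, which is enough to drop the server count. Real savings depend on the actual overhead of the platforms being compared.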

Understanding the Bare metal Hypervisor

The hypervisor is the software layer that interfaces with the physical components of a machine, and it is what makes bare metal virtualization possible. Its key functions include partitioning, allocating, and managing resources so that multiple operating systems can run on a single machine.

As in hosted virtualization, a bare metal hypervisor allocates and manages the hardware resources of a server. It partitions the machine’s physical CPU, RAM, storage, and network bandwidth and grants them to virtual machines based on need, priority, and the criteria set for each VM. Every virtual machine operates independently on virtualized hardware, unaware of the other virtual machines sharing the host.

Bare metal hypervisors manage virtual machines with a finely tuned resource management system. They keep virtual machines in a healthy operating state by comparing current performance metrics against historical ones to spot patterns and anomalies in resource usage. Where the platform supports it, expected bottlenecks are handled proactively, with allocations adjusted before they affect the workload.

Key Functions of Bare metal Hypervisors

Resource Scheduling

Hypervisors use a scheduler to distribute physical processing resources among the virtual machines on a host, both in real time and according to configured schedules.

Each virtual machine is provisioned with its own set of virtual resources. When the system is overloaded or at peak load, critical virtual machines are prioritized and less important ones are throttled, which helps maintain responsiveness and stability.
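One common way to express "prioritize critical VMs, throttle the rest" is weighted fair sharing, sketched below in Python. This is an illustrative technique chosen for the example, not a description of any particular hypervisor's scheduler; the `share_cpu` function, VM names, weights, and core counts are all hypothetical.

```python
def share_cpu(capacity, demands, weights):
    """Weighted fair sharing: split capacity by weight, never exceeding demand."""
    grants = {vm: 0.0 for vm in demands}
    active = set(demands)
    remaining = capacity
    while active and remaining > 1e-9:
        total_weight = sum(weights[vm] for vm in active)
        satisfied = set()
        spent = 0.0
        for vm in active:
            offer = remaining * weights[vm] / total_weight
            take = min(offer, demands[vm] - grants[vm])
            grants[vm] += take
            spent += take
            if grants[vm] >= demands[vm] - 1e-9:
                satisfied.add(vm)
        remaining -= spent
        if not satisfied:
            break  # everything left was handed out in proportion to weight
        active -= satisfied  # redistribute leftovers among unsatisfied VMs
    return grants


# 16 cores available, 24 requested: the low-weight batch VM gets throttled.
demands = {"db-vm": 8, "web-vm": 4, "batch-vm": 12}
weights = {"db-vm": 4, "web-vm": 2, "batch-vm": 1}
grants = share_cpu(16, demands, weights)
print({vm: round(cores, 2) for vm, cores in grants.items()})
```

In this example the two higher-weight VMs receive everything they asked for, and the batch VM absorbs the whole shortfall. When total demand fits within capacity, every VM simply receives its full demand.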

Memory Management

Managing memory is critical in a virtualized environment. Hypervisors employ techniques such as memory ballooning and transparent page sharing to optimize virtual machine memory. These methods let the system reclaim memory that a VM has been allocated but is not using and give it to another VM, which increases efficiency and scalability.
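The idea behind transparent page sharing can be sketched in a few lines of Python: identical memory pages across VMs are stored once. This is a simplified model under stated assumptions. The page contents and VM names are made up, and a real implementation would also compare full page contents after a hash match and use copy-on-write when a VM modifies a shared page.

```python
import hashlib


def shared_page_savings(vm_pages):
    """Return (total pages, physical pages needed if identical pages are shared).

    vm_pages maps a VM name to a list of page contents (bytes).
    """
    total_pages = sum(len(pages) for pages in vm_pages.values())
    unique = {
        hashlib.sha256(page).digest()
        for pages in vm_pages.values()
        for page in pages
    }
    return total_pages, len(unique)


PAGE_SIZE = 4096
zero_page = bytes(PAGE_SIZE)          # untouched memory
kernel_page = b"K" * PAGE_SIZE        # stand-in for identical guest OS code
vm_pages = {
    "vm-a": [zero_page, kernel_page, b"A" * PAGE_SIZE],
    "vm-b": [zero_page, kernel_page, b"B" * PAGE_SIZE],
}
total, physical = shared_page_savings(vm_pages)
print(total, physical)
```

VMs that run the same guest OS tend to hold many identical pages, which is why this kind of deduplication can free memory for other VMs.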

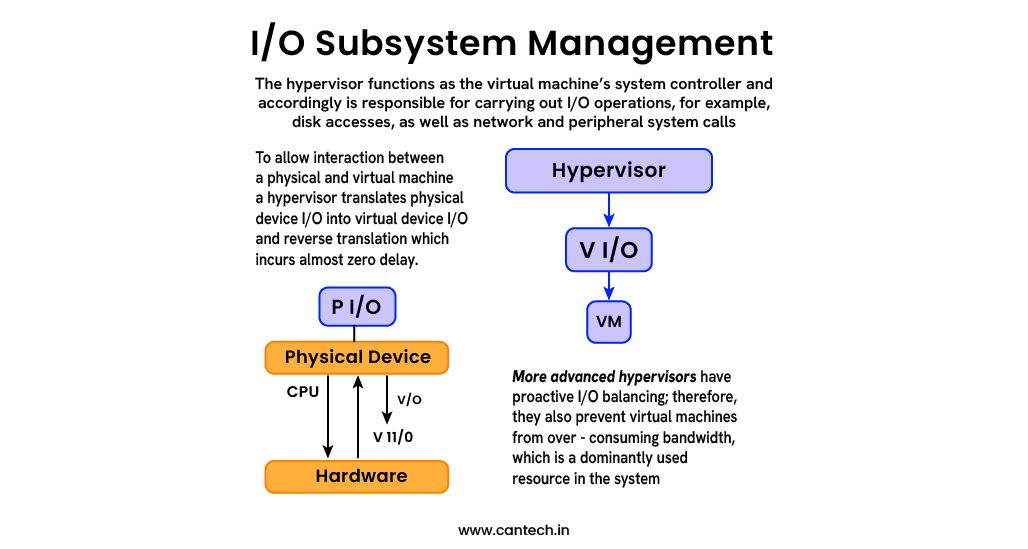

I/O Management

The hypervisor acts as the virtual machine’s system controller and is therefore responsible for carrying out I/O operations such as disk accesses, network traffic, and peripheral requests. To let a virtual machine interact with physical devices, the hypervisor translates virtual device I/O into physical device I/O and back again, ideally with very little added delay. More advanced hypervisors also balance I/O proactively, preventing any one virtual machine from over-consuming bandwidth, which is a heavily contended resource in the system.
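A token bucket is one widely used way to stop a single consumer from taking too much bandwidth, and it gives a feel for how per-VM I/O limits can work. The sketch below is an assumption for illustration: the article does not say which algorithm any hypervisor uses, and the `TokenBucket` class and its numbers are hypothetical. Time is passed in explicitly so the behaviour is easy to follow.

```python
class TokenBucket:
    """Allow bursts up to `capacity` bytes, refilled at `rate` bytes per second."""

    def __init__(self, rate, capacity):
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity  # start with a full bucket
        self.last = 0.0

    def allow(self, nbytes, now):
        """Return True if a request of nbytes may proceed at time `now` (seconds)."""
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if nbytes <= self.tokens:
            self.tokens -= nbytes
            return True
        return False  # over the limit: the caller should delay or queue the I/O


bucket = TokenBucket(rate=100, capacity=200)
first = bucket.allow(150, now=0.0)   # fits within the initial burst allowance
second = bucket.allow(100, now=0.0)  # only 50 tokens left, so it is refused
third = bucket.allow(100, now=1.0)   # one second of refill makes room again
print(first, second, third)
```

Giving each VM its own bucket caps its sustained rate while still permitting short bursts.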

Examples of Prominent Bare Metal Hypervisors

VMware ESXi

- Overview: This hypervisor is well-known in the enterprise sector as it has proven to be dependable over the years.

- Strengths: The VMware ecosystem (vSphere, vCenter, etc.) adds stability and features around the hypervisor.

- Use Cases: It is designed for enterprise-grade virtualization, supporting large data centers and hybrid cloud environments.

- Notable Features: The VMware vSphere ecosystem provides advanced features such as live migration with vMotion, High Availability (HA), and extensive third-party integrations.

Microsoft Hyper-V

- Overview: A powerful hypervisor that is built into Windows Server.

- Strengths: Integrates closely with other Microsoft products, so it is most effective in a Microsoft environment.

- Use Cases: Windows-based businesses, small and medium enterprises, and development and testing environments.

- Notable Features: Hyper-V offers replication, live migration, and dynamic memory.

KVM (Kernel-based Virtual Machine)

- Overview: This is Linux’s native hypervisor, built into the Linux kernel.

- Strengths: Free, customizable, and supported by cloud platforms like OpenStack.

- Use Cases: Companies offering cloud services, DevOps support firms, and enterprises with skilled Linux staff.

- Notable Features: Strong performance for Linux workloads and highly scalable virtualization with low system overhead.
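Since KVM lives in the Linux kernel, a quick way to see whether a Linux machine is likely able to use it is to look for the CPU virtualization flags (`vmx` for Intel VT-x, `svm` for AMD-V) in `/proc/cpuinfo` and for the `/dev/kvm` device node. The Python sketch below is a best-effort check for x86 Linux only; the function names are our own, and a `False` result can also mean the feature is disabled in firmware or the KVM module is not loaded.

```python
import os


def cpu_virtualization_flags(cpuinfo_text):
    """Return the hardware virtualization flags found in /proc/cpuinfo text."""
    found = set()
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags") and ":" in line:
            flags = line.split(":", 1)[1].split()
            found.update(flag for flag in flags if flag in ("vmx", "svm"))
    return found


def kvm_available(cpuinfo_path="/proc/cpuinfo", kvm_device="/dev/kvm"):
    """Best-effort check: CPU flag present and the KVM device node exists."""
    try:
        with open(cpuinfo_path) as handle:
            flags = cpu_virtualization_flags(handle.read())
    except OSError:
        return False  # not Linux, or /proc is unavailable
    return bool(flags) and os.path.exists(kvm_device)


print(kvm_available())
```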

Bare metal vs. Hosted Virtualization: A Performance Showdown

Not all systems are virtualized in the same way. There are two primary categories, bare metal and hosted, each suited to different objectives and environments. Understanding how they differ makes it easier to judge their impact on performance, security, and suitable use cases.

Why This Distinction Matters, Especially for AI & ML

AI and ML workloads use resources aggressively. They demand rapid data processing, real-time calculation, heavy communication between components, and minimal lag, and the extra layer in hosted virtualization often holds them back.

Below is how bare metal is particularly useful:

Unmediated Access to Hardware accelerators

Software built for Artificial Intelligence (AI), Machine Learning (ML), and similar domains commonly relies on hardware accelerators such as GPUs, TPUs, or FPGAs. With a bare metal hypervisor, virtual machines can be granted unmediated access to these devices, which lets workloads approach the hardware’s maximum throughput.

Reliable Outcomes

Reliable, repeatable results are particularly important for machine learning systems. In hosted environments, background activity in the host OS often disrupts the resources available to a workload. Bare metal systems have no host OS to cause this kind of interference.

Cybersecurity Essentials

In domains such as healthcare and finance, which handle sensitive data and use AI and ML extensively, bare metal systems provide stronger workload isolation, which reduces the risk of data exfiltration or unintended communication between workloads.

Scalability for the cloud

Hyperscalers such as Amazon, Google, and Microsoft provide bare metal offerings for businesses that need maximum performance during complex model training or large-scale inference, combining bare metal performance with cloud scale.

When to Use Each

Choose bare metal if

- You run enterprise-grade databases, deep learning systems, or complex compute workloads that require performance, security, and scalability.

Choose hosted if

- You are working on new, experimental, or low-priority applications or on virtual test environments.

- You want a solution with minimal resource requirements that is easy to set up, which suits these smaller-scale activities.

The Function of Virtual Machines and Containers in Contemporary Systems

Across the IT industry, most companies use virtual machines and containers to develop, scale, and manage applications efficiently. Both improve workload isolation and resource utilization, but they differ in structure, performance, and use cases.

To understand what each one does, we will look at them in turn.

What Are Virtual Machines (VMs)?

A virtual machine is an isolated computing environment that emulates an entire physical computer inside a host system. Each VM runs its own full operating system, such as Windows or Linux, along with any required applications and libraries. The hypervisor (bare metal or hosted) controls system resources such as CPU, RAM, and storage and allocates them to the VMs, which behave much like physical servers.

Key Characteristics of VMs

- Complete stack in each environment: OS, application, and dependencies

- Strong security and isolation between environments

- Multiple OS types on a single hardware platform

- More overhead, because each VM runs an entire OS instance

What Are Containers?

A container is a lightweight, portable unit that packages an application together with its dependencies. Unlike VMs, containers share the host system’s kernel instead of running a full OS of their own, which makes them more efficient in resource consumption and faster to start.

Key Characteristics of Containers

- Use the host OS kernel (typically Linux)

- Lightweight and quick to start or stop

- Well suited to cloud-native applications and microservices

- Lower overhead than VMs, but weaker isolation

To know more about Containers, read our in-depth guide on What Are Containers?

VMs and Containers: Better Together

While containers and VMs may seem like opposing technologies, in most cases the two work in tandem. One of the most common deployment strategies today is to use VMs to host containers.

Why? Combining the two lets each technology contribute what it does best:

- Security and Isolation from VMs: Strong isolation is one of the most notable advantages of VMs. When containers are grouped into separate VMs, the VM boundary shields one group from another wherever that separation is needed.

- Speed and Agility from Containers: Containers deploy in seconds, making them suitable for microservices as well as CI/CD pipelines.

- Operational Flexibility: VMs give teams standardized infrastructure, while portable containers carry the applications.

- Cloud hyperscalers such as AWS, Azure, and Google Cloud run and orchestrate Kubernetes clusters on virtual machines as part of their offerings, for stronger security and more reliable scaling.
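The "containers inside VMs" pattern above can be sketched as a small placement routine in Python. It illustrates only the grouping idea, under the assumption that the isolation boundary is the tenant; it is not how Kubernetes or any cloud provider schedules workloads, and the function name, tenant names, and VM naming scheme are hypothetical.

```python
from collections import defaultdict


def place_containers(containers, slots_per_vm):
    """Group containers into VMs so that no VM mixes tenants.

    containers: list of (container_name, tenant) pairs.
    Returns a dict of VM name -> list of container names.
    """
    by_tenant = defaultdict(list)
    for name, tenant in containers:
        by_tenant[tenant].append(name)

    placement = {}
    for tenant, names in sorted(by_tenant.items()):
        # Fill one VM at a time; open another VM when the current one is full.
        for start in range(0, len(names), slots_per_vm):
            vm_name = f"{tenant}-vm-{start // slots_per_vm}"
            placement[vm_name] = names[start:start + slots_per_vm]
    return placement


containers = [("api", "acme"), ("worker", "acme"), ("db", "acme"), ("api", "globex")]
placement = place_containers(containers, slots_per_vm=2)
print(placement)
```

Containers from the same tenant share a VM and its kernel, while containers from different tenants are separated by the VM boundary.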

Also read our Containers vs VM comparison to understand the difference between the two.

Key Technologies: Docker and Kubernetes

Docker streamlines containerization by establishing a reproducible standard for building, deploying, and managing containers, which supports the “build once, run anywhere” paradigm and simplifies workflows.

Kubernetes automates the orchestration of containerized applications. With Kubernetes, companies can automate the deployment, scaling, load balancing, and self-healing of their applications. Kubernetes works well on top of virtual machines, which provide secure, multi-tenant environments.

Read the guide on what is Kubernetes.

Why Is This Important in the Current Stack?

In DevOps, cloud computing, and AI/ML workflows, speed and reliability are of utmost importance. VMs offer security and stability, while containers offer agility. Running containers within VMs allows organizations to:

- Enforce security within multi-tenant environments.

- Protect the underlying infrastructure while dramatically increasing the scale of applications.

- Keep application workflows consistent across development, testing, and production.

Why Virtualization is Always Important in AI and Machine Learning

The infrastructure that AI/ML runs on, including bare metal virtualization, matters as much as the algorithms themselves. New AI/ML workflows rely more and more on bare metal virtualization because of its performance and isolation.

Looking at AI/ML workflows shows how virtualization can be used to boost productivity and operational efficiency.

Resource Sharing with High-Performance Hardware

Fast access to resources is critical when running complex algorithms. AI and ML algorithms require immense computational power and often rely on GPUs and TPUs. Bare metal virtualization makes these costly resources available to multiple teams and users, and easier to manage, while maintaining performance.

A Type 1 hypervisor makes it possible for a single GPU server to be shared by multiple users. As a result, several virtual machines are able to access a single GPU server concurrently, allowing for simultaneous testing, training, and full resource utilization of the GPU.

Sharing sophisticated hardware in this way improves the return on investment and lets AI teams keep scaling without running short of resources.
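One simple way to picture several VMs sharing a single GPU is round-robin time slicing, sketched below in Python. This is a toy model for intuition only: real GPU sharing relies on vendor and hypervisor mechanisms that are not described in this article, and the function name, VM names, and durations are hypothetical.

```python
from collections import deque


def time_slice(jobs, slice_ms):
    """Round-robin one GPU across VMs; return (vm, run_ms) in execution order.

    jobs maps a VM name to the GPU time it still needs, in milliseconds.
    """
    queue = deque(jobs.items())
    timeline = []
    while queue:
        vm, remaining = queue.popleft()
        run = min(slice_ms, remaining)
        timeline.append((vm, run))
        if remaining > run:
            queue.append((vm, remaining - run))  # back of the line for the rest
    return timeline


timeline = time_slice({"train-vm": 25, "test-vm": 10}, slice_ms=10)
print(timeline)
```

The short test job finishes after its first slice instead of waiting for the long training job to complete, while the GPU never sits idle.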

Isolation and Security

AI and ML systems handle sensitive algorithms, customer information, and critical business logic, so isolation is especially pertinent for industries such as healthcare, finance, and defense that prioritize secrecy and protection of their business models.

Virtual machines offer strong safeguards and separation for every user, making them well suited to AI training in a secure environment. Each virtual machine operates independently and manages its own policies on security, authentication, and data storage, so multiple virtual machines on a single physical machine are protected from one another.

Consider a bank training its fraud detection models: running them in isolated virtual machines helps protect data privacy and supports regulatory compliance.

Scalability and Reproducibility

Within AI research, scalability and reproducibility are critical, and virtualization helps with both.

- Scalability: Model training and evaluation can be scaled up when demand rises and scaled back down afterwards, keeping resource use in balance. Peak workloads can be met flexibly and at large scale.

- Reproducibility: The “it works on my machine” problem is addressed by VM spin-up, which allows the environment, including code, libraries, and setup, to be created automatically and identically each time. The resulting isolation strengthens scientific reliability.

Glossary: “VM spin-up” refers to the process of creating and starting a virtual machine (VM).
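One lightweight way to check that two spun-up VMs really have the same environment is to fingerprint a description of it, as in the Python sketch below. The approach and the `environment_fingerprint` helper are our own illustration, and the image name and package versions are placeholders, not recommendations.

```python
import hashlib
import json


def environment_fingerprint(spec):
    """Hash an environment description so two VMs can be compared quickly."""
    # Sorting keys makes the hash independent of the order entries were listed in.
    canonical = json.dumps(spec, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical environment descriptions; versions are placeholders.
vm_a = {"os_image": "ubuntu-22.04", "packages": {"torch": "2.3.0", "numpy": "1.26.4"}}
vm_b = {"packages": {"numpy": "1.26.4", "torch": "2.3.0"}, "os_image": "ubuntu-22.04"}
vm_c = {"os_image": "ubuntu-22.04", "packages": {"torch": "2.2.0", "numpy": "1.26.4"}}

same = environment_fingerprint(vm_a) == environment_fingerprint(vm_b)
different = environment_fingerprint(vm_a) != environment_fingerprint(vm_c)
print(same, different)
```

Recording the fingerprint alongside experiment results makes it easier to tell later whether two runs used the same environment.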

Case Study:

Sharing GPU servers through virtual machines lets an entire data science team work on classifying and processing medical images. With virtual machines, team members are no longer constrained by library version conflicts. Each team member can run PyTorch and TensorFlow on separate VMs, which greatly simplifies environment cloning and removes the need for individually hand-configured machines.

Explore the full article on What is PyTorch.

Conclusion

When direct interaction with hardware is a priority, bare metal virtualization is hard to beat. In these scenarios, bare metal solutions remain the most commonly adopted because of their performance, streamlined access to resources, and operational efficiency. Hosted virtualization cannot match these benefits because the host OS adds performance-degrading layers between the VMs and the hardware.

From our observations, bare metal hypervisors provide optimal resource allocation, secure workload isolation, and centralized visibility over the infrastructure, all of which are critical advantages. The virtual machines they host get the benefit of powerful hardware along with the strict reproducibility, boundary enforcement, and resource allocation that are crucial to AI and Machine Learning workflows.

With ongoing innovation in bare metal virtualization, its contribution to security is expected to grow in importance as demand rises. These technologies will support cloud computing as a foundation for advanced scientific research and exploration.

Alongside these shifts, AI at the edge, quantum computing, and federated learning will add to the demand for virtualization as a resource for digital transformation.