AI development, deep learning, and large-model training all require powerful compute, but not everyone has the budget to buy high-end GPUs such as the NVIDIA A100 or RTX 4090. Cloud GPUs give developers, startups, researchers, and businesses on-demand access to scalable hardware without a long-term investment. This guide explains how GPU rental works, what its advantages are, how GPU pricing is structured, and how to choose the most suitable GPU solution in India.

What Does It Mean to Rent GPUs for AI?

Renting a GPU means accessing high-performance graphics processing units that are hosted in the cloud and billed on a pay-per-use basis. Instead of buying physical hardware that may sit idle once your project ends, you deploy a GPU instance from a cloud service and pay only for the time you use it.

Cloud GPU rental is widely used for:

- AI model training

- Deep learning experiments

- Machine learning research

- Large-scale inference

- Data science and analytics

- Computer vision and NLP workloads

You get fast access to enterprise-level infrastructure with minimal capital expenditure.

Why Rent GPUs Instead of Buying?

1. Zero Capital Expenditure

GPUs such as the NVIDIA A100 or the NVIDIA RTX series are expensive. Beyond the GPU itself, you also pay for servers, cooling, networking, and maintenance.

Renting avoids the upfront infrastructure investment and turns capital expenditure into operating expenditure.

2. Pay Only for What You Use

Cloud GPU rental typically follows an hourly or per-minute pricing model. This is ideal for:

- Short-term AI projects

- Training bursts

- Experimentation

- Research workloads

You can start and stop instances anytime to control costs.

3. Instant Scalability

If your training job needs more GPUs, you can scale up on demand. Need 4 GPUs today and 16 tomorrow? There is no wait for hardware procurement. This matters for AI teams working with large datasets or LLMs.

4. Access to Latest GPU Technology

Cloud providers refresh their GPU fleets, so you do not have to upgrade hardware yourself. Newer GPUs can shorten training times and improve performance.

How GPU Rental Pricing Works

GPU rental pricing depends on several factors:

- Type of GPU selected

- Number of GPUs required

- Storage and bandwidth usage

- Duration of usage

- Region or data center location

Most providers offer:

- Hourly billing

- On-demand plans

- Reserved plans for cost savings

- Custom enterprise pricing

The key advantage is cost control: you stop paying for GPU compute time as soon as the instance is shut down.
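To see how these factors combine, the sketch below estimates the cost of an on-demand rental from the number of GPUs, the hourly GPU rate, the runtime, and storage. It is a minimal illustration under stated assumptions: the class and function names are hypothetical, the rates are placeholder figures rather than any provider's actual prices, and it assumes runtime is rounded up to the provider's billing increment (hourly or per-minute), which varies between providers.

```python
import math
from dataclasses import dataclass


@dataclass
class RentalQuote:
    """Hypothetical on-demand pricing inputs (placeholder rates, any currency)."""
    gpu_rate_per_hour: float              # price of one GPU for one hour
    storage_rate_per_gb_month: float      # price of 1 GB of storage for one month
    billing_increment_minutes: int = 60   # 60 = hourly billing, 1 = per-minute billing


def estimate_cost(quote: RentalQuote, num_gpus: int, runtime_minutes: float,
                  storage_gb: float, storage_months: float) -> float:
    """Estimate total rental cost: billed GPU time plus storage."""
    # Assumption: runtime is rounded up to the next billing increment.
    increments = math.ceil(runtime_minutes / quote.billing_increment_minutes)
    billed_hours = increments * quote.billing_increment_minutes / 60
    gpu_cost = num_gpus * quote.gpu_rate_per_hour * billed_hours
    storage_cost = storage_gb * quote.storage_rate_per_gb_month * storage_months
    return round(gpu_cost + storage_cost, 2)


if __name__ == "__main__":
    hourly = RentalQuote(gpu_rate_per_hour=200.0, storage_rate_per_gb_month=5.0)
    per_minute = RentalQuote(200.0, 5.0, billing_increment_minutes=1)
    # 4 GPUs for 150 minutes plus 100 GB of storage for one month.
    print(estimate_cost(hourly, 4, 150, 100, 1))      # billed as 3 hours
    print(estimate_cost(per_minute, 4, 150, 100, 1))  # billed as 2.5 hours
```

With these placeholder rates, hourly billing charges the 150-minute job as 3 full hours (2900.0 in total), while per-minute billing charges exactly 2.5 hours (2500.0), which shows why billing granularity matters for short, bursty jobs.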

Who Should Rent GPUs for AI?

Startups and AI Companies

Early-stage startups often lack the resources to invest in heavy infrastructure. Renting GPUs lets them build and iterate quickly without a large upfront outlay.

Data Scientists and Researchers

Academics and research groups get access to powerful GPUs for training models without investing in costly infrastructure.

Enterprises Scaling AI Projects

Large organizations running production AI workloads can provision GPUs dynamically to match demand.

Developers Testing AI Models

Developers experimenting with new models can spin up short-term GPU instances for training and validation.

How to Choose the Right GPU Rental Provider

When evaluating cloud GPU services, consider:

- Transparent pricing

- Latest GPU availability

- High-speed SSD storage

- Low latency networking

- 24/7 technical support

- Data center location in India for reduced latency

Also check whether the provider offers preinstalled AI frameworks and containerized deployments, which make models easier to manage.

High-Performance NVIDIA GPUs Available at Cantech for AI Workloads

Cantech offers NVIDIA GPUs as an enterprise-grade rental service for AI training, deep learning, machine learning inference, generative AI, rendering, and high-performance computing. Whether you are building a large language model, running computer vision pipelines, or serving models in production, Cantech provides scalable cloud GPUs for the workload.

The NVIDIA GPUs available at Cantech are described below.

Built for large-scale AI and foundation models, the H200 offers the high memory capacity and bandwidth that demanding workloads need. It is best suited to large-scale AI training and processing massive datasets.

A powerful AI accelerator designed for training and deploying large language and deep learning models. Delivers exceptional performance with advanced Tensor Core technology.

An established enterprise-grade GPU for production AI and deep learning. It scales well across multiple GPUs and reduces model training time.

Widely used for AI research and HPC tasks, the V100 provides stable Tensor Core performance for mid to large-scale workloads.

The L40S combines powerful AI compute with graphics acceleration, making it well suited to hybrid workloads such as generative AI and rendering.

An efficient, affordable GPU for real-time neural network inference and AI APIs. Suitable for startups and edge deployments.

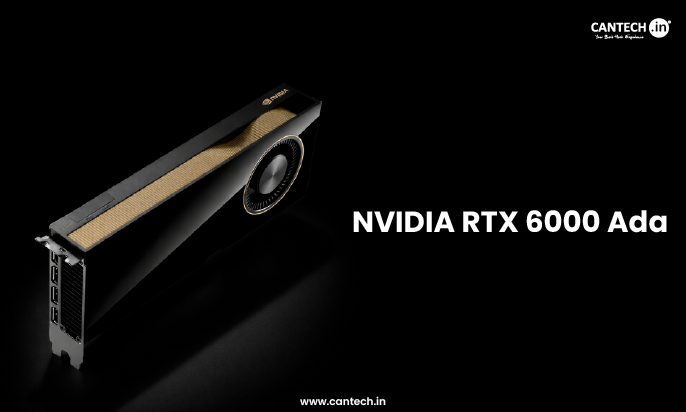

Offers large VRAM capacity and professional-grade AI acceleration. Well suited to memory-intensive deep learning and simulation workloads.

Balances performance and cost, making it a good fit for growing AI teams and medium-sized ML projects.

Designed for AI development and data science workflows, it provides stable performance for moderate workloads.

Supports AI prototyping, inference, simulation, and creative workloads. A practical choice for development and testing.

PyTorch Optimized GPU Instances

Cantech also offers preconfigured GPU environments with popular AI frameworks such as PyTorch and TensorFlow, which let teams deploy and train models quickly.

Choosing the Right GPU for Your AI Project

Selecting the right GPU depends on:

- Model size and complexity

- Dataset scale

- Training time requirements

- Budget constraints

- Production or experimentation needs

For large language models and enterprise AI: H200, H100, and A100

For research and advanced training: V100, RTX A6000, RTX A5000

For inference and cost-sensitive workloads: T4, RTX series

For generative AI and rendering: L40S
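If you script instance selection or provisioning, the recommendations above can be captured as a simple lookup table. The sketch below only encodes the mapping stated in this guide; the category keys and the `recommend_gpus` helper are hypothetical names introduced for illustration, not part of any provider's API.

```python
# Workload-to-GPU recommendations taken from the list above.
# The category keys are hypothetical labels chosen for this sketch.
GPU_RECOMMENDATIONS: dict[str, list[str]] = {
    "llm_enterprise": ["H200", "H100", "A100"],
    "research_training": ["V100", "RTX A6000", "RTX A5000"],
    "inference_cost_sensitive": ["T4", "RTX series"],
    "generative_ai_rendering": ["L40S"],
}


def recommend_gpus(workload: str) -> list[str]:
    """Return the recommended GPUs for a workload category (as a copy)."""
    try:
        # Return a copy so callers cannot mutate the shared table.
        return list(GPU_RECOMMENDATIONS[workload])
    except KeyError:
        valid = ", ".join(sorted(GPU_RECOMMENDATIONS))
        raise ValueError(
            f"unknown workload {workload!r}; expected one of: {valid}"
        ) from None


if __name__ == "__main__":
    print(recommend_gpus("inference_cost_sensitive"))
```

Keeping the mapping in data rather than in branching logic makes it easy to update as the available GPU lineup changes.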

Why Rent NVIDIA GPUs from Cantech?

Cantech cloud GPU solutions enable businesses and developers to develop, test, and deploy AI models without buying physical hardware.

Whether you need a single RTX GPU for development or multiple H200 GPUs for training, Cantech can deliver the performance, flexibility, and reliability your AI workloads require.

Cantech provides:

- On-demand GPU rental

- Scalable multi-GPU clusters

- Flexible hourly pricing

- High-speed SSD storage

- Secure cloud infrastructure

- Fast deployment within minutes

Benefits of Renting GPUs for AI in India

For Indian businesses and developers, renting GPUs locally offers:

- Lower latency

- Easier compliance with local data regulations

- Faster support response

- Competitive pricing relative to international providers

It also avoids import delays and the complexity of purchasing hardware.

Conclusion

Renting GPUs is a cost-effective way to get scalable compute for AI training and inference. Instead of spending heavily on hardware that can become obsolete, businesses and developers can access powerful cloud GPUs whenever they need them.

Whether you are developing machine learning models, training deep learning networks, or deploying AI applications at scale, GPU rental provides the flexibility and performance you need.

The future of AI is cloud-based, scalable, and on-demand. Renting GPUs is not merely a cost-saving measure; it is a strategic advantage.

FAQs on Renting GPUs

Is GPU Rental Secure?

Yes, GPU rental can be secure when you use a trusted provider. Reputable providers offer encrypted storage, isolated environments, access controls, and secure networking. Always choose a provider that follows strong security and compliance standards.

How Fast Can I Get Started?

Most cloud GPU platforms allow deployment within minutes. Pre-configured images with frameworks like TensorFlow and PyTorch simplify setup.

Can I Scale Up During Training?

Yes. You can add GPUs or move to larger instances based on workload requirements. This flexibility helps avoid bottlenecks during model training.

Is GPU Rental Cost-Effective for Long-Term Use?

For continuous workloads, reserved plans often reduce costs significantly. For variable workloads, on-demand pricing is more economical than buying hardware.
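To make the rent-versus-buy trade-off concrete, the sketch below computes a break-even point: the number of GPU-hours at which cumulative rental spend equals the cost of owning the hardware, and how many calendar months that takes at a given utilization. It is a deliberately simplified model under stated assumptions: ownership is modeled as a purchase price plus a flat hourly operating cost (power, cooling, maintenance), resale value and reserved-plan discounts are ignored, and the function names and all figures are hypothetical placeholders.

```python
import math


def break_even_hours(purchase_price: float, owned_cost_per_hour: float,
                     rental_rate_per_hour: float) -> float:
    """GPU-hours after which owning becomes cheaper than renting.

    Simplified model (assumption): owning costs purchase_price up front plus
    owned_cost_per_hour while running; renting costs rental_rate_per_hour.
    Returns math.inf if renting is never more expensive per hour than owning.
    """
    hourly_saving = rental_rate_per_hour - owned_cost_per_hour
    if hourly_saving <= 0:
        return math.inf
    return purchase_price / hourly_saving


def months_to_break_even(hours: float, utilization: float,
                         hours_per_month: float = 730.0) -> float:
    """Convert break-even hours to calendar months at a utilization in (0, 1]."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return hours / (hours_per_month * utilization)


if __name__ == "__main__":
    # Placeholder figures only, not real prices.
    hours = break_even_hours(purchase_price=1_000_000,
                             owned_cost_per_hour=50,
                             rental_rate_per_hour=250)
    for utilization in (1.0, 0.5, 0.25):
        months = months_to_break_even(hours, utilization)
        print(f"utilization {utilization:.0%}: {months:.1f} months")
```

The lower the expected utilization, the longer it takes to recover a hardware purchase, which is why on-demand rental tends to suit variable workloads while steady, near-continuous workloads are better served by reserved plans.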